How to Become Smarter and More Rational (Yes, You Too)

We need to chat about some of the silly things you and I do every day.

And, look, we’re smart people, which is why this is all hard to admit sometimes.

No one wants to acknowledge their flaws, their weaknesses, their shortcomings.

But… we have a lot of them. A LOT of them. We have so many of them that there’s a Wikipedia list of them.

These flaws, weaknesses, and shortcomings are your cognitive biases (okay, maybe you have other problems too but we’ll save those for later). They’re the mental shortcuts and blind spots that lead us to make bad decisions, form bad beliefs, and act against our best interest.

But here’s the good news!

You can mitigate your susceptibility to them. You can reduce the impact that these biases have on your decision making, and become smarter and more logical in the process.

And no, no one is “special” and unbiased. I’d go so far as to say that the more you think you’re unbiased, the more biased you actually are. It all comes back to the Bias Blind Spot where we think we’re less biased than other people. In the coming examples, I’m certain you’ll find at least one that you’ve done recently.

The fact of the matter is that we all need to work on reducing how much we’re affected by these biases on our own (because pointing them out to people in conversation is a great way to make them hate you), so let’s get started.

Reducing Your Cognitive Biases

Awareness is the most important factor for reducing the power your biases have over you.

This list is your new best friend. It’s Wikipedia’s index of the recorded cognitive biases, with short descriptions and links to their full descriptions.

Open it up, and start going through the biases one by one. See if you can think of an example where someone else demonstrated that bias in the last month. I say someone else because the Bias Blind Spot that I linked to earlier tells us that you’ll be bad at recognizing your own biases (at least for now).

Start doing this on a monthly basis, maybe even weekly in the beginning. The goal is to get to the point where as soon as you start thinking or acting based on one of these biases, you can catch it and change course. And also so that when someone else is arguing or acting from a clearly biased mindset, you can just smile and nod and quietly laugh (in your head, please) at how silly they’re being.

But trying to do everything at once isn’t the best course of action. Instead, let’s do a little triage.

Which Biases to Focus on First

Not all biases are created equal. There are some you’ll run across 10x more than the others, and those are the ones to make yourself most aware of.

There’s no official frequency list, but these are the ones that I see far and away the most:

- Sunk cost bias

- Confirmation bias

- Availability heuristic

- Impact bias

- Hindsight bias

- Semmelweis Reflex

- Survivorship Bias

- Actor-observer bias (or Fundamental attribution error)

Let’s run through them quickly and how you can root them out.

Sunk Cost Bias

Definition

The sunk cost bias describes our tendency to weigh lost resources (time, money, energy) in a decision, even when those resources are gone regardless of the decision we make.

Examples

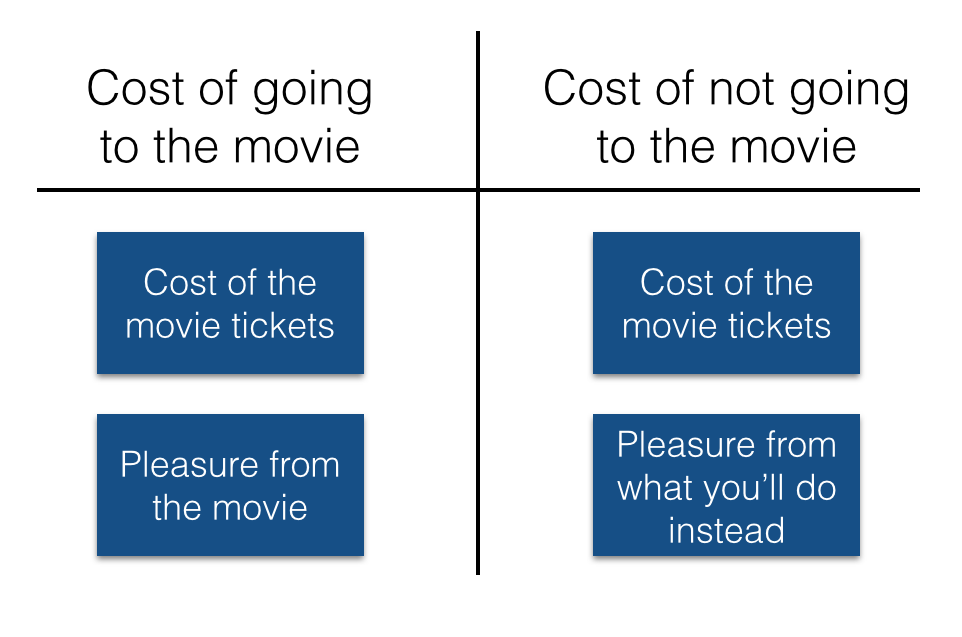

For example, let’s say you bought movie tickets for tonight. Whether or not you go to the movies, you are not getting that money back, so the fact that you spent money on it should not affect your decision. The only thing affecting your decision should be whether or not you still want to see the movie.

Or, say you’re debating ending a relationship. The fact that you’ve been in it for a year, two years, etc. shouldn’t affect your decision because either way you’re not getting that time back. It’s gone. The question is only if you want to spend one or two more years.
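If it helps to see the logic spelled out, here is a minimal sketch of the movie ticket decision in Python. The function name and the "enjoyment" numbers are made up for illustration; the only point is that the money already spent shows up on both sides of the comparison and cancels out.

```python
def should_go(expected_enjoyment: float, remaining_cost: float, sunk_cost: float = 0.0) -> bool:
    """Compare going vs. staying home (hypothetical units of 'value').

    sunk_cost is subtracted from BOTH options, because it is gone
    whether you go or not. That is why it can never change the answer.
    """
    value_if_go = expected_enjoyment - remaining_cost - sunk_cost
    value_if_stay = 0.0 - sunk_cost
    return value_if_go > value_if_stay


# Same movie, same mood, three different ticket prices already paid.
for ticket_price in (0.0, 15.0, 150.0):
    decision = should_go(expected_enjoyment=5.0, remaining_cost=2.0, sunk_cost=ticket_price)
    print(f"Paid {ticket_price:>6.2f} -> go? {decision}")
```

Whatever the ticket cost, the answer is the same each time. The only inputs that matter are how much you still want to see the movie and what it will cost you from here on (time, gas, popcorn).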

Avoiding it

You partially have your parents to blame for your susceptibility to this bias.

Imagine being a kid, convincing your parents to take you to a theme park, and then deciding (after they bought the tickets) that you don’t want to go anymore. They would be pissed, right? You’d either be forced to go or get punished (unless you have unusually bias-aware parents).

So from a young age we have the idea that we “need” to follow through on past commitments (especially financial) drilled into us. But we don’t have to follow through on past commitments, and that mentality may well be part of the obesity problem (clean plate club be damned).

From now on, anything you buy is an option and not an obligation.

So that theme park ticket? It’s an option to go, not a forcing factor.

That year-long relationship? You have the option to continue it, not the obligation.

Those kids you had? You have the option to keep feeding… never mind, forget that one.

Confirmation Bias

Definition

The confirmation bias is our tendency to look for, remember, and believe information that supports our existing beliefs, and also to ignore, forget, and not believe information that goes against them.

Examples

Just look at social media:

- New age hippy healthy eaters posting pseudo-science supporting a vegan / paleo / whole foods diet

- Fat people posting about the media creating body-image issues and how everyone is healthy regardless of size

- Republicans posting articles that support less gun regulation

- Democrats posting articles supporting greater income redistribution

- Atheists posting about what they find ridiculous in religion

- Religious people posting stories about miracles / people talking to God

- Whites posting about not being racist

- One-percenters posting about trickle down economics

- Middle-class people posting about Wall Street corruption

- Have I offended everyone yet? Close enough, let’s move on.

Or, to find examples in yourself, look at your own sources of information. If you’re a Democrat, do you read Cato articles? If you’re agnostic about GMOs, do you read raw food and vegan blogs? Probably not, since we tend to read information that supports something we already believe. Why? Because it makes us feel warm and fuzzy and like we know what we’re talking about.

Hell, I doubt that anyone reading this article at this point is surprised by any of this. All the people who “aren’t biased” (laughs) stopped reading a long time ago and went back to Buzzfeed.

Avoiding it

First, develop a good understanding of illusory correlation and the scientific method.

Then, avoid reading anything from a source that has one-sided skin in the game (either for or against the idea).

For example, a blog like “Mark’s Daily Apple,” while an excellent source for living a paleo life, is a terrible source for assessing whether or not Paleo is a good idea. Why? Because if he were to say “Paleo might be a bad idea” his whole business would be dead!

Similarly, don’t get critiques of Republicans from Republicans (or Democrats for that matter, it goes both ways), Christians from priests, Prohibition from bartenders, etc. Before you listen to anyone’s opinion, ask whether they’re arguing something just to back up their own decisions or lifestyles.

When you come across anything that supports what you already believe, critique it just as hard as you would something you disagree with.

And, when possible, try to look for sources that contradict what you believe. Look at their arguments fairly, and see how they affect your beliefs.

Availability Heuristic

Definition

The availability heuristic tells us that we tend to believe things more based on the number of memories or examples we have, and how emotionally charged those memories are.

Examples

For a couple weeks, I thought fairly strongly that Bernie Sanders was seriously competing with Clinton for the Democratic nomination. This belief mostly came from two or three friends dominating my Facebook newsfeed with articles about him.

It was only a few days ago that I looked it up and found out that he only has ~11% of the vote versus her ~60% (if you’re reading this and Sanders is president, well, joke’s on me).

Simply seeing a bunch of articles about him, and how he’s doing “well,” made me believe something that I had no evidence for.

Avoiding it

The easiest first step is to avoid the news and any source of information that needs to fill time and space to stay in business. It will end up repeating stories frequently, and the more you hear them, the more you’ll believe them.

When you’re making a big decision, get equal amounts of information for both sides. Researching one side more will make you believe it more since there will be more examples and arguments for it available to your mind.

In general, ignore single instances of data, and look for trends instead.

Impact Bias

Definition

The impact bias tells us that we tend to overestimate the length and intensity of our emotional reactions to changes in our lives, good or bad.

Examples

For example, when you buy a new car, it’s great for a little while, but then it’s just the car you drive to work.

Or, maybe you’ve had the experience of going on vacation and it being amazing for the first few days or week, and then towards the end everything is just normal and you’re not impressed by it anymore.

Or, maybe you thought that ending that relationship or not getting that job / college would be completely emotionally devastating, but then got over it in a few weeks.

When bad things happen, our minds are great at coping with short-term emotional traumas. We get over them quicker than we anticipate.

When good things happen, our minds are again adjusting to new circumstances, and we’re good at it. Emotional deviations from the norm are more fleeting than we expect.

Avoiding it

When something bad happens, or when you’re worried about something bad happening, don’t imagine how long you think it’s going to affect you. Instead, think about how long something similar affected you in the past. When you look back, you’ll realize that you weren’t as emotionally destroyed as you expected.

For good changes, remember that you’re going to adjust to everything quickly. That means that instead of making huge purchases or shifts (expensive cars, houses), you would create greater long-term happiness by making many small improvements. That way you’re always getting the short spike in happiness, and not spending as much time being used to what you already have.

Remember that you’re going to quickly adjust to any change in your life. So if you’re happy where you are, quitting your job and moving abroad won’t make you happier for long. Nor will getting a bigger house. Or a nicer car.

But ditching situations which perpetually create unhappiness (bad relationships, jobs you hate, cars that keep breaking down, school, etc.) will bring you sustained higher happiness. Don’t focus on improving your upside, but instead on minimizing your downside.

Hindsight Bias

Definition

The easiest way to think of the hindsight bias is as the “knew it all along” effect. Anytime someone (or you) claims to have known something all along… they (or you) most likely didn’t.

Examples

Whenever someone (or you) says something to the effect of “I really should have invested in that stock / not gone out with that girl / discovered The Secret sooner” they’re committing the hindsight bias (or lunacy in the third case).

We mostly do this to make ourselves feel like we’re not stupid. No one wants to admit that they had no idea that something was going to be a good / bad idea, so we tell these stories to make ourselves feel better.

Of all the ones on this list, I would particularly recommend you don’t point this one out to other people. No one wants to hear “come on, you had no idea that guy was going to want you to dress up like a horse and neigh for him, get over it, you screwed up.” Just let them live in their fantasy world where they were able to predict the future and chose not to.

Avoiding it

Avoiding it is simple. Just accept that we suck at predicting the future and that we don’t need to prove how smart we are to other people.

Recognize that when you take an “I knew it all along” stance, you deny yourself the ability to learn from your mistakes. And if you’re not learning from your mistakes, you’re bound to repeat them. Accept that you had no idea how the future was going to turn out! Now you know not to go home with guys wearing spurs, leather jackets, and carrying a saddle around.

Semmelweis Reflex

Definition

The Semmelweis reflex is anytime someone reacts to new information by immediately dismissing it because it contradicts an existing paradigm. This is especially common if believing it requires the believer to accept that they’ve been making some sort of error for a long period of time.

It’s called the Semmelweis Reflex because Ignaz Semmelweis figured out that doctors were killing new mothers by not washing their hands after handling cadavers. He went to hospitals to tell them, and thankfully, they realized their error and changed their policies.

Just kidding.

They laughed him out of the building, had him declared insane, and he was eventually beaten to death in an asylum.

Why didn’t doctors believe him? Because if they did then they’d be admitting that their mistakes had killed countless mothers. It was too much cognitive dissonance for doctors to handle, so they killed the messenger (and many more mothers).

Birth is messy anyway, why wash our hands first?

Examples

The most common examples, in my experience, are in health.

If you tell someone that not eating for a few days is healthy, they’ll assume you’re anorexic or crazy.

People are starting to realize that eating fat doesn’t necessarily make you fat, and that fat is actually the best macronutrient for burning fat, but I still get weird looks about this.

Or try telling a Democrat / Republican that people who subscribe to the opposite ideology are just as rational as they are. You’ll probably get laughed at (surely if they were logical they’d agree with your philosophy…).

You’ll see it any time you try to tell someone something that would mean they had been living a contradiction for any significant period of time. We have a strong aversion to cognitive dissonance and so it’s easier to ignore the message than accept we might have been screwing up.

Avoiding it

If you’re reading this, then you shouldn’t have too much problem avoiding it. You recognize to a certain extent that you’re plagued with these biases and will be more aware of them kicking in.

But a mindset that helps is to assume that you don’t fully know anything (a la Socrates). When it comes to health, politics, economics, business, etc. assume that you have an incomplete understanding of whatever it is and that you should receive new information with open, yet skeptical, arms.

Life is not like politics: flip-flopping is perfectly okay, and it’s exactly what you should do in the face of new information. Don’t stick to your guns out of pride. Admit that you were wrong, move on, and be happy with your improved understanding.

Survivorship Bias

Definition

The survivorship bias is when we assume that someone who has accomplished something has done so because they’re special, talented, hard working, etc. instead of considering that they might just be lucky, or at least largely lucky.

Examples

You see this in startups when someone gains massive respect for being involved in a successful company, regardless of how much of a role they played in its success.

Or let’s say that someone drinks a bunch of carrot juice and their cancer goes away. Does that mean carrot juice cures cancer? No, because all the people who drank carrot juice and died aren’t here to tell us about it. This is closely related to the causation / correlation confusion.

Or, hey, Asians are good at math, right? No, just the ones who worked hard enough to make it into top US schools. You’re ignoring the hundreds of millions who have no respectable math skills at all. (This is also related to base-rate neglect.)

Avoiding it

If you want to avoid being affected by the survivorship bias, always be asking “what about the opposite?”

Look at whatever claim is being made, and think about how the opposite could be true.

- Could there be people who were involved in a successful company that were useless? Certainly.

- Could there be people who drank a bunch of carrot juice and still died of cancer? Certainly.

- Could there be Asians who are bad at math? Certainly.

You’ll frequently find that not only is the opposite potentially true, but it’s more likely true, and the original claim is just a biased misunderstanding.
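To make the carrot juice example concrete, here is a rough simulation sketch in Python. Everything in it is an assumption for illustration: the group size, the 10% recovery rate, and the premise that juice has zero effect are invented numbers, not real medical data. It shows how a world where juice does nothing still produces thousands of “I drank carrot juice and recovered” stories.

```python
import random


def simulate(n_per_group: int = 50_000, recovery_rate: float = 0.10, seed: int = 0) -> dict:
    """Simulate patients whose recovery is pure chance, juice or no juice.

    All parameters are hypothetical; the juice has no effect by construction.
    """
    rng = random.Random(seed)
    # Every patient gets the same recovery chance, whatever they drank.
    juice_recovered = sum(rng.random() < recovery_rate for _ in range(n_per_group))
    other_recovered = sum(rng.random() < recovery_rate for _ in range(n_per_group))
    return {
        "juice_survivor_stories": juice_recovered,
        "juice_rate": juice_recovered / n_per_group,
        "no_juice_rate": other_recovered / n_per_group,
    }


result = simulate()
print(f"Juice drinkers who recovered and can tell the story: {result['juice_survivor_stories']}")
print(f"Recovery rate with juice:    {result['juice_rate']:.3f}")
print(f"Recovery rate without juice: {result['no_juice_rate']:.3f}")
```

If you only listen to the survivors, you hear a pile of success stories. Once you count the people who drank the juice and didn’t make it, the two recovery rates come out about the same, which is what asking “what about the opposite?” is designed to reveal.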

Actor-Observer Bias

Definition

The actor-observer bias, or fundamental attribution error, is when we assign our own shortcomings to our situation and assign others’ shortcomings to an aspect of their personality.

Examples

Let’s say you’re driving along. The person in front of you suddenly slows down and turns right, without signaling. “What an asshole! Clearly they can’t drive. They should get off the road. God I hate people who are like that. How did they get a license?!”

Then, suddenly, you realize you’re about to miss your turn. You slam on the brakes and turn without signaling. The guy behind you honks at you, and you say “yeah, yeah, but I was distracted, it happens, I don’t normally do that.”

See the contradiction? When someone else screws up or does something stupid, we assume that’s part of who they are, and not a result of their situation. But when WE screw up, it’s just situational and isn’t a reflection on who we are.

Usually, though, it’s the latter that’s true: people make mistakes and it’s most likely part of their situation, not their personality. Tons of mistakes and errors might be a sign of personal weakness, but one data point isn’t enough to make those claims.

Anyone who says homeless people are just lazy would certainly blame the government if they themselves ended up on the streets.

Avoiding it

When someone screws up or misbehaves, assume it has something to do with their situation and not with who they are.

Someone completely losing their shit and yelling at you probably got fired / in a fight with their spouse / failed an exam. It’s a reaction to their situation, so don’t take it personally.

Now, this is hard to do. You’ll have to fight your impulses to get pissed at people. But if you do, you’ll feel better when people do inevitably screw up, and you in turn won’t be as frustrated with them.

This doesn’t mean forgive everything though. Obviously repeat offenders are assholes and not just in a bad situation. But if you only have one or two interactions to go off of… you don’t have enough data.

Getting Started

Don’t try to fix all of these biases at once. Like I said, go through the list of biases each month and see which ones you still need to work on.

And don’t beat yourself up when you find yourself succumbing to one. It happens all the time. The good thing is that you recognized it so you can be more aware of similar situations in the future.

And get in the habit of recognizing them now. What’s one situation in the last week where you fell victim to one of these biases? Let me know in the comments.